How so? What would you have done?

Assuming the data base (and I use the term loosely) was chosen for speed, I would have used a database that used sets. I worked on an application where a relational database would have been too slow, and it used a set-based database (whose name I can no longer remember) that had all the convenience and safety one would expect from a modern construct.

The only issue I have with that large number of small files is the automated backup of my files on all of my network computers to a single NAS. It takes forever and a day for the NAS to process all of these files.

Try a restore from Dropbox, never again…

Are you referring to set-based approaches vis-à-vis procedural? Doesn’t this still refer to SQL databases? Roon uses a key-value, i.e., NoSQL, database to store data. A benefit of these databases is performance.

As for backup, if you have the correct tools, many files and folders are a non-issue. I use two backup products, both lock-free (block level and deduplication), that handle local backup in minutes. Even a cloud backup is relatively fast.

Since December, the Roon database has significantly reduced in size.

Wish I could tell you more or, at least, the name of the vendor.

I remember it was chosen, by people who knew what they were doing, for its speed and fail-safe mechanisms, mechanisms evidently lacking in Roon’s database. Instead of tables, or many little files, it worked with sets that were accessed through Boolean operations and with keys.
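For anyone unfamiliar with the idea, here is a toy sketch of "sets accessed through Boolean operations and with keys". It is not the product described above (its name is unknown), and the index contents and function names are invented purely for illustration.

```python
# Hypothetical index: each key maps to the set of record IDs that carry it.
# All keys and IDs here are made-up example data.
index = {
    "genre:jazz": {1, 2, 5, 8},
    "decade:1960s": {2, 3, 5},
    "format:flac": {1, 2, 3, 4, 5},
}


def query_and(*keys):
    """Record IDs that carry every given key (set intersection)."""
    sets = [index.get(key, set()) for key in keys]
    return set.intersection(*sets) if sets else set()


def query_or(*keys):
    """Record IDs that carry at least one given key (set union)."""
    return set().union(*(index.get(key, set()) for key in keys))


def query_and_not(include, exclude):
    """Record IDs that carry `include` but not `exclude` (set difference)."""
    return index.get(include, set()) - index.get(exclude, set())


if __name__ == "__main__":
    print(query_and("genre:jazz", "decade:1960s"))
    print(query_and_not("format:flac", "genre:jazz"))
```

Lookups are key-driven and the Boolean operators map directly onto intersection, union, and difference, with no table joins involved.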

It’s been almost 30 years and I no longer have many of those memories, thank the deities.

I think the Roon people know what they’re doing too. A relational database simply wouldn’t cut it with Roon. Furthermore, I think you are conflating database corruption caused by a failure on the host machine, e.g., disk errors, with database choice and its implementation.

Try backing up 1M 1KB files, then one 1GB file and see the difference. No matter what tools you’re using, you have to go through the filesystem. Metadata operations like opens and closes are expensive.
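A scaled-down way to try this yourself, as a rough sketch: it reads the same number of bytes once as many small files and once as a single file, and times both. The file counts, sizes, and function names are assumptions for illustration. Because the files have just been written, they will probably be served from the OS cache, so this mostly exposes per-file open/close overhead and says nothing definitive about a NAS or a cloud target.

```python
import os
import tempfile
import time


def write_many(directory, count, size):
    """Write `count` files of `size` bytes each into `directory`."""
    payload = b"x" * size
    for i in range(count):
        with open(os.path.join(directory, f"f{i:06d}.bin"), "wb") as fh:
            fh.write(payload)


def read_many(directory):
    """Read every file in `directory`; one open/close per file."""
    total = 0
    for name in os.listdir(directory):
        with open(os.path.join(directory, name), "rb") as fh:
            total += len(fh.read())
    return total


def read_one(path):
    """Read a single file; one open/close in total."""
    with open(path, "rb") as fh:
        return len(fh.read())


def compare(count=2000, size=1024):
    """Return (bytes_many, bytes_one, seconds_many, seconds_one)."""
    with tempfile.TemporaryDirectory() as root:
        many_dir = os.path.join(root, "many")
        os.mkdir(many_dir)
        write_many(many_dir, count, size)

        single = os.path.join(root, "single.bin")
        with open(single, "wb") as fh:
            fh.write(b"x" * (count * size))

        t0 = time.perf_counter()
        many_bytes = read_many(many_dir)
        t1 = time.perf_counter()
        one_bytes = read_one(single)
        t2 = time.perf_counter()
    return many_bytes, one_bytes, t1 - t0, t2 - t1


if __name__ == "__main__":
    many_bytes, one_bytes, t_many, t_one = compare()
    print(f"many small files: {many_bytes} bytes in {t_many:.4f}s")
    print(f"one large file:   {one_bytes} bytes in {t_one:.4f}s")
```

The actual timings depend heavily on the filesystem, the storage device, and caching, so treat the numbers as indicative only.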

Sorry, a data base that gets corrupted seemingly as often as Roon’s does is failing in its responsibilities.

That is one of the charters of databases, i.e. to be relatively immune to hardware failures, etc. Really, that’s what database checkpoints are for.

Not with block-level or chunking backup methods. Both of these methods use the file system, but are not aligned with file boundaries.

Sorry, I missed your earlier reference to block-level backup. Yes, those can be fast and don’t depend on the number or size of individual files, but they are not using the file system, so they work at volume level. You lose the ability to back up individual directories or filter out certain files. Regardless, I don’t see any reason for a persisted database to span that many files.

Incorrect on both counts.

I use block-level backup for my system volume (EFI and / [root]), and this can work at system, volume, or file level. For everything else, I use lock-free deduplication, including /home which is also on the / partition. This can “back up individual directories or filter out certain files”.

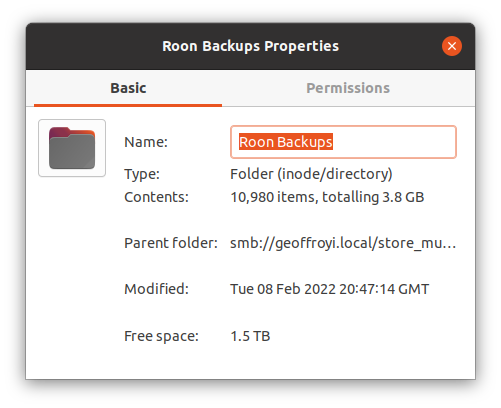

A fresh backup from Roon over my LAN took <5 m. Backing up that folder to a local drive took <2 m; to S3 would take longer, but only because I have lousy broadband upload speeds.

I’ve started a cloud backup and that indexed in about 7 minutes. Uploading will take another twenty, so all told ~30 m to back up Roon securely (encrypted) to cloud. Subsequent backups will take far less time.

I just copied a 3.8 GB file over my LAN and it took < 35s. Copying the same file from one HDD (two discs in RAID0) to an SSD took <10s.

Your point? Copying a single file and taking a database snapshot are not the same. Likewise, copying ≠ backing up.

Sure, I’ll say it again: copying 1M files of 1 KB each is a lot slower than copying one 1 GB file. Try to back up one 3.8 GB file instead of the Roon database and see what difference it will make for you.

Not when you’re backing up chunks. If 1% of your 4 GB database file changes, I’d only need to copy a few chunks, not the whole file. As I said, copying is not the same as backup. Use the correct tools, and all of this is irrelevant.
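To illustrate the chunk argument, here is a minimal sketch of chunk-level deduplication. It assumes fixed-size chunks and an in-memory dictionary as the chunk store; the names `backup`, `restore`, and `CHUNK_SIZE` are hypothetical and do not come from any particular backup product. Real tools commonly use variable-size (content-defined) chunks so that inserted bytes do not shift every later chunk.

```python
import hashlib

CHUNK_SIZE = 4096  # assumed fixed chunk size, chosen only for this example


def backup(data, store, chunk_size=CHUNK_SIZE):
    """Add chunks of `data` missing from `store`.

    Returns (manifest, new_chunk_count), where the manifest is the ordered
    list of chunk digests needed to rebuild `data`.
    """
    manifest = []
    new_chunks = 0
    for offset in range(0, len(data), chunk_size):
        chunk = data[offset:offset + chunk_size]
        digest = hashlib.sha256(chunk).hexdigest()
        if digest not in store:
            # Only chunks not seen before are copied.
            store[digest] = chunk
            new_chunks += 1
        manifest.append(digest)
    return manifest, new_chunks


def restore(manifest, store):
    """Rebuild the original bytes from a manifest and the chunk store."""
    return b"".join(store[digest] for digest in manifest)


if __name__ == "__main__":
    store = {}
    # 100 distinct 4 KiB chunks of example data.
    original = b"".join(i.to_bytes(4, "big") * 1024 for i in range(100))
    _, first = backup(original, store)

    # Flip one byte inside a single chunk, then back up again.
    changed = bytearray(original)
    changed[7 * CHUNK_SIZE + 10] ^= 0xFF
    manifest, second = backup(bytes(changed), store)

    print(f"first backup stored {first} chunks, second stored {second}")
    assert restore(manifest, store) == bytes(changed)
```

The first backup stores every chunk; the second stores only the chunk containing the changed byte, which is the behaviour the post describes.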

There’s also the initial backup, which picks up everything, and it also depends on how many of those tens of thousands of files Roon changes each time it backs up, so it’s not irrelevant. I’m sorry to criticize Roon, but if they just serialized the whole thing to one file, both my copying and your “block-level dedup” backup would be better off.

To be clear, what I quoted was the initial backup, i.e., the longest duration. Chunking isn’t concerned about file size… chunks are not the same as blocks and don’t care about file boundaries.

Artist images have been handled outside the db since Valence Art Director was introduced. That’s your reduction.