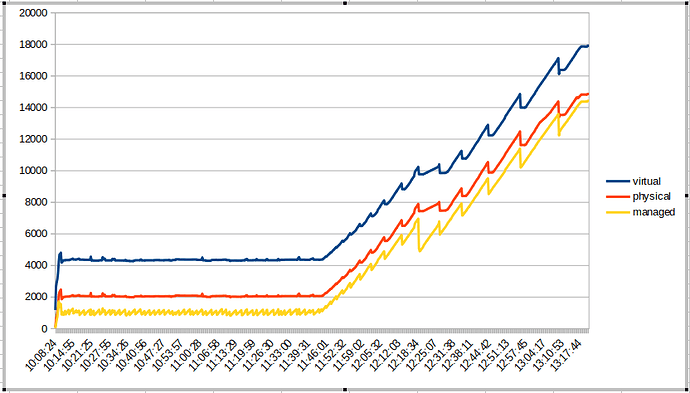

Plotted virtual, physical and managed memory on this one, as reported by the Roon logs. It doesn't show much other than the steady march towards memory exhaustion.

I did a search in the forums and found a support request from another user who seems to have seen the same issue as me, but he was running in a VMware host rather than directly on a physical server. Unfortunately, there's nothing in that forum post that can help me, as the conversation appears to have moved over to direct messages.