What do you mean by “not Nyquist sampled”? Every digital signal is sampled and thus has a Nyquist limit determined strictly by the sampling rate. Whether it’s natively sampled at that rate or oversampled, or whether it’s represented by two or more levels, is not relevant. Oversampling is applied specifically to extend the Nyquist range and populate it with unwanted DSP products, namely those that result from bit reduction, so it is very much a Nyquist range.

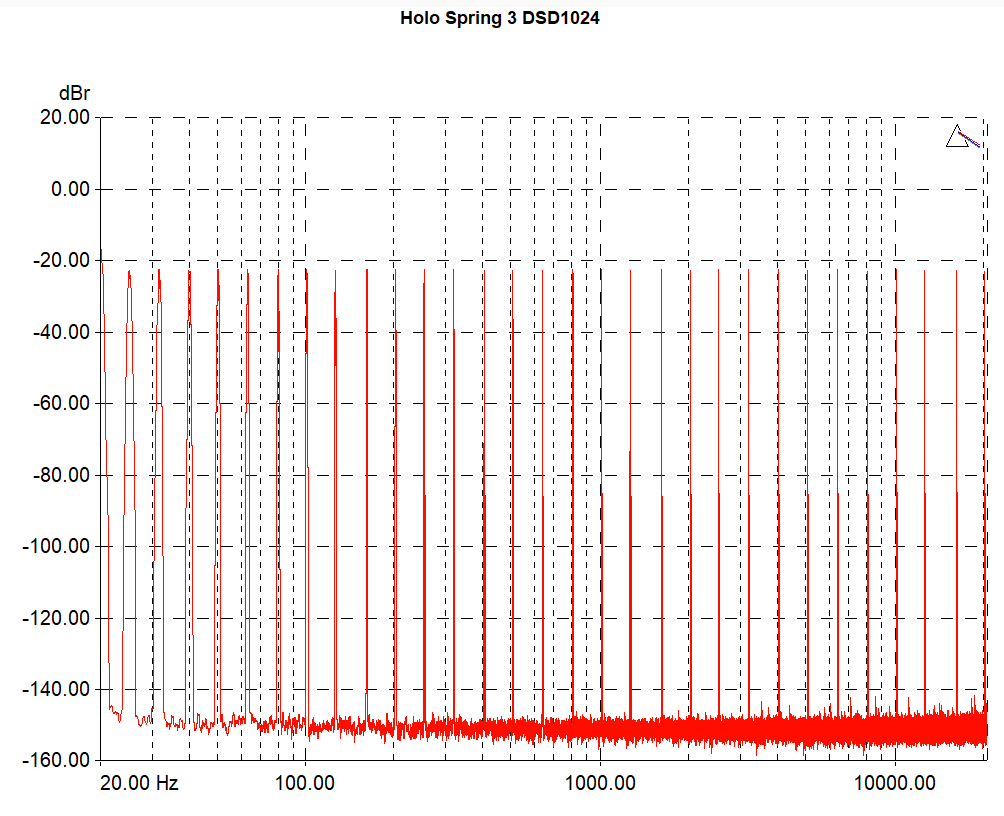

Regardless, where does the 5.6MHz figure come from in the context of DSD1024 anyway?

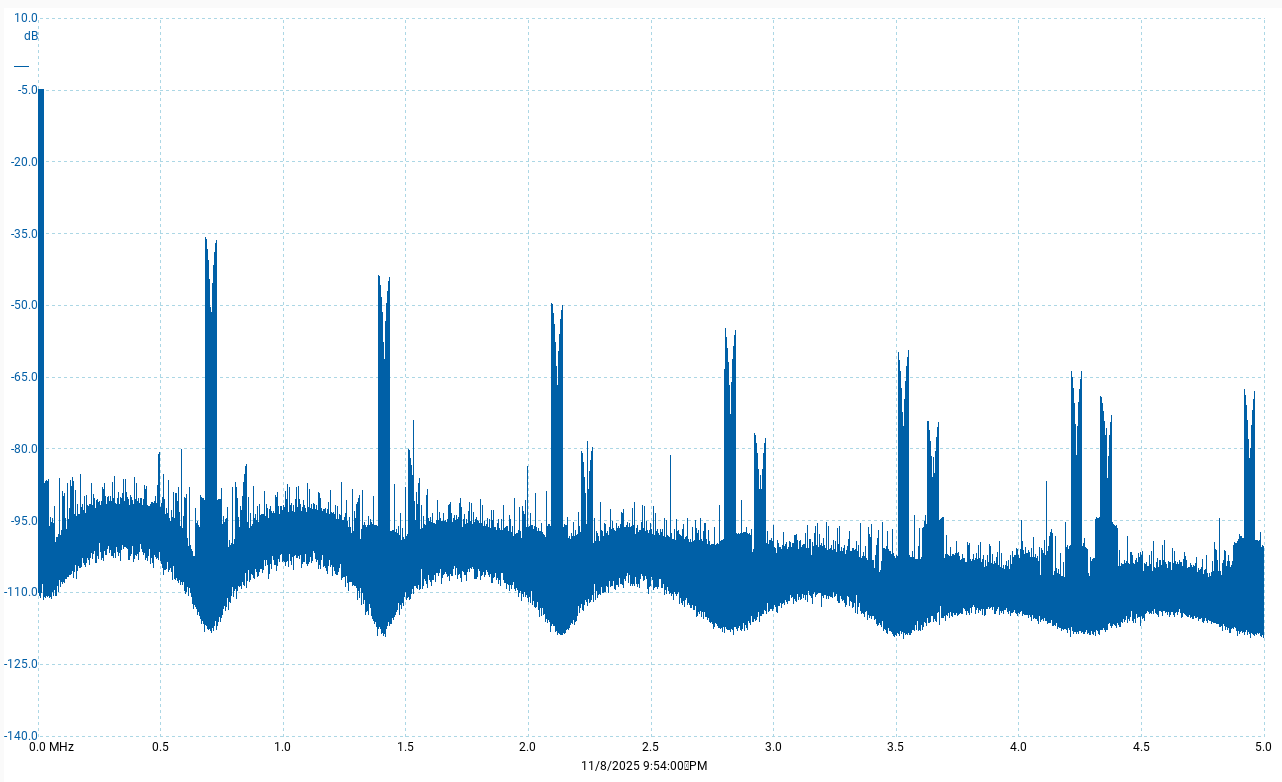

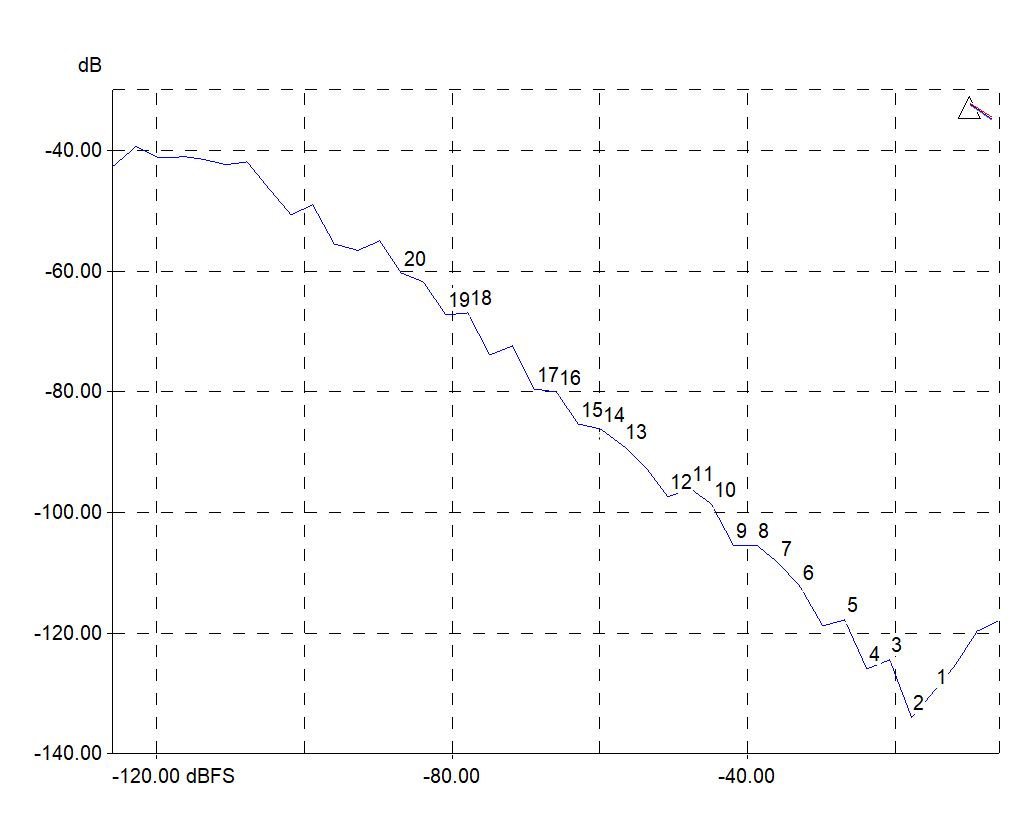

There’s no maybe: they are idle tones within fs/2, which excludes periodic images. By definition, idle tones are solely the products of DSP and unrelated to the signal. You disagreed with the term “idle tone” and I responded. I did not say those were correlated; the other two frequencies I showed in the second graph are the ones correlated with the signal.
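To illustrate what "product of DSP, unrelated to the signal" means, here is a minimal sketch of a toy first-order 1-bit delta-sigma modulator fed pure digital silence. This is an assumption-laden illustration, not the modulator behind my graphs (real DSD modulators are higher order), and the names `first_order_dsm` and `period` are made up for this example. Even with zero input, the two-level output falls into a repeating pattern, which is a tone that no signal put there.

```python
def first_order_dsm(u, n):
    """Toy first-order 1-bit delta-sigma modulator fed a constant input u."""
    v = 0.0                      # integrator state
    out = []
    for _ in range(n):
        y = 1 if v >= 0 else -1  # 1-bit quantizer: only two output levels
        out.append(y)
        v += u - y               # integrate the error between input and output
    return out


def period(seq):
    """Smallest p for which seq repeats every p samples, or None."""
    for p in range(1, len(seq) // 2 + 1):
        if all(seq[i] == seq[i + p] for i in range(len(seq) - p)):
            return p
    return None


silence = first_order_dsm(0.0, 64)   # zero input, i.e. no signal at all
print(silence[:8])                   # alternating +1 / -1 pattern
print(period(silence))               # the pattern repeats every 2 samples
```

In this toy case the pattern repeats every two samples, which puts the tone at fs/2; where such tones land in a real, higher-order modulator depends on its design.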

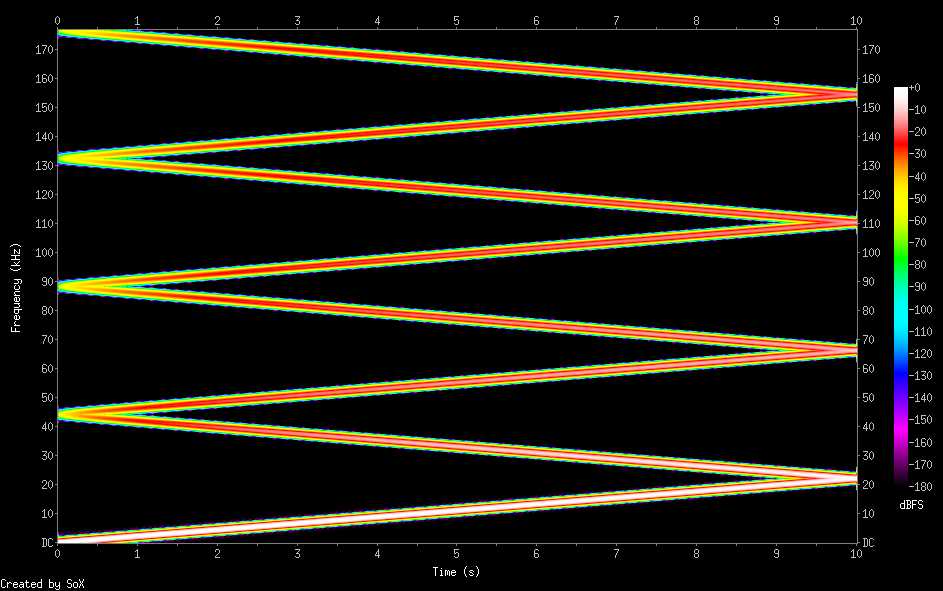

Why would I show an 8x bandwidth when the signal can’t carry anything over fs/2? Obviously, any extension beyond that is periodic and thus redundant.

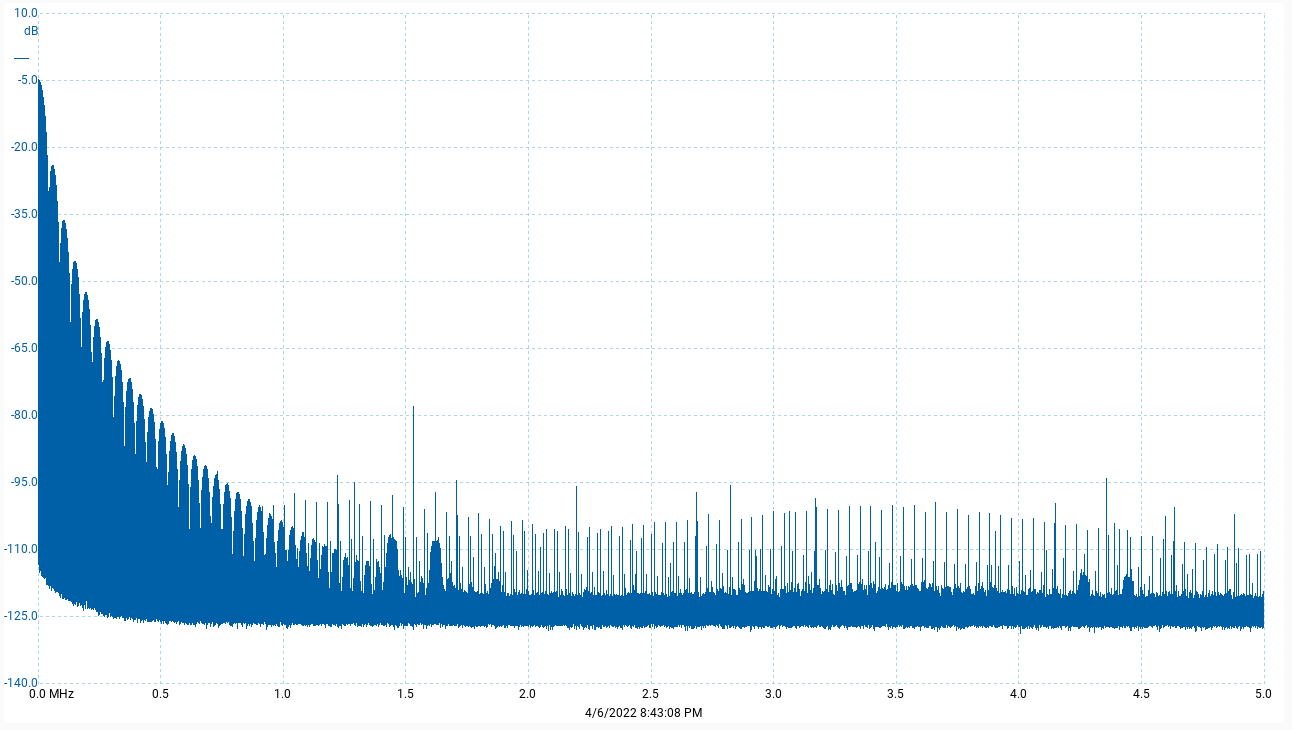

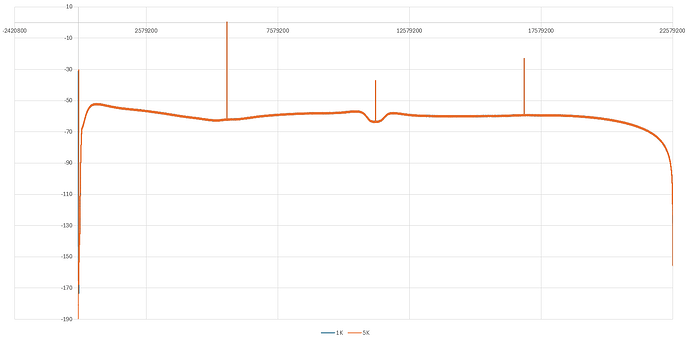

Once again, my graphs don’t show anything over fs/2, so there’s no redundancy there and thus they should contain no periodic images. To make that clear, this is the full spectrum again - linear this time - with the upper axis limit set exactly to 22,579,200Hz, i.e. fs/2, a.k.a. the Nyquist limit, for DSD1024:

It’s clear the two idle tones are not periodic images, since one is smaller than the other.
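For reference, the fs/2 figure above can be checked with a few lines of arithmetic. This is a quick sketch that assumes the usual naming convention where DSDn runs at n × 44,100Hz; the helper names are made up for this example.

```python
BASE_FS = 44_100  # Hz, CD sample rate (assumed base for the DSDn naming)


def dsd_sample_rate(multiple: int) -> int:
    """Sample rate in Hz for DSD<multiple>, e.g. multiple=1024 for DSD1024."""
    return multiple * BASE_FS


def nyquist_limit(fs: int) -> int:
    """Nyquist limit (fs/2) in Hz; these rates are even, so // is exact."""
    return fs // 2


for m in (64, 128, 256, 512, 1024):
    fs = dsd_sample_rate(m)
    print(f"DSD{m}: fs = {fs:,} Hz, fs/2 = {nyquist_limit(fs):,} Hz")
```

Under that assumption, DSD1024 comes out at fs = 45,158,400Hz and fs/2 = 22,579,200Hz, which is the upper axis limit I used.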

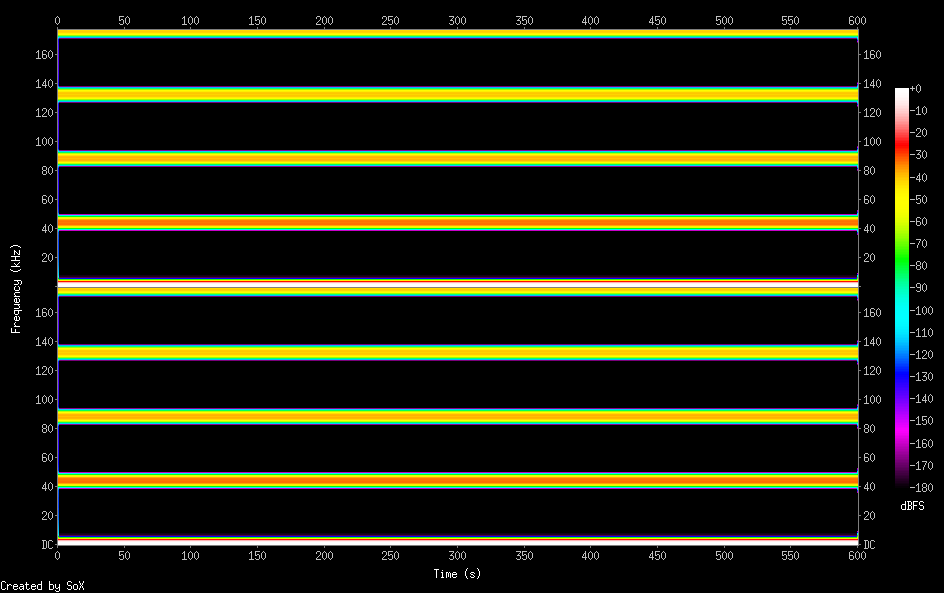

And as I mentioned earlier, that’s irrelevant for my point, since quantization noise is a digital concept and shows up in the digital signal. Should it be filtered out during D/A conversion? Yes. Will it be audible? Most probably not. But correlation will always be present in the digital domain when you only have two levels, and it’s going to be present throughout the whole spectrum. Noise shaping doesn’t move noise from one place to another; it just shapes it, so if it’s present in some regions, it’s also going to be present in the audible range to some extent.